A system may perform well in simulation or under idealized steady-state assumptions, but its practical value is determined by how it behaves when exposed to real pipeline dynamics, measurement uncertainty, transient operations, and controlled loss events.

That’s why we recently put our gas leak detection model to the test at Southwest Research Institute (SwRI), on their High-Pressure Loop at the Metering Research Facility. It gave us a chance to evaluate our system in a controlled, instrumented, high-pressure natural gas environment — one designed to replicate the conditions that make transmission pipeline leak detection hard.

Why testing at SwRI mattered

Gas behaves differently than liquids. Unlike nearly incompressible liquids, gas expands and contracts — meaning temperature, pressure, and flow velocity all interact and affect how much gas is physically in the pipe at any given moment. As pressure gradients shift, so does gas density, which means line inventory is constantly changing.

This makes mass-balance and pressure-profile leak detection especially tricky. Normal pipeline activity — flow ramps, pressure swings, line-pack changes — can produce imbalance signals that look a lot like a leak. The real challenge isn’t just comparing inlet flow to outlet flow. It’s distinguishing normal compressible-flow behavior from an actual sustained loss, even when the operating variables are messy and hard to separate.
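To make the line-pack effect concrete, here is a minimal sketch of a line-pack-corrected mass balance on a single pipe segment. It is an illustration only, not the detection framework described in this post. The function names, the single-segment setup, the uniform pressure and temperature, the fixed compressibility factor, and every number are assumptions chosen for readability.

```python
# Hypothetical illustration of a line-pack-corrected mass balance.
# Assumptions: one segment, uniform pressure and temperature, pure methane,
# and a fixed compressibility factor z. None of these values come from the tests.

R_SPECIFIC_METHANE = 518.3  # J/(kg*K), approximate specific gas constant for methane


def gas_inventory_kg(pressure_pa, temperature_k, volume_m3, z=1.0):
    """Mass of gas held in the segment, from m = P*V / (z*R*T)."""
    return pressure_pa * volume_m3 / (z * R_SPECIFIC_METHANE * temperature_k)


def corrected_imbalance_kg(mass_in_kg, mass_out_kg, inventory_start_kg, inventory_end_kg):
    """Inlet minus outlet, minus the change in line pack over the same window.

    Near zero: the inlet/outlet difference is explained by packing or unpacking.
    Sustained positive value: mass is leaving the segment unaccounted for.
    """
    return (mass_in_kg - mass_out_kg) - (inventory_end_kg - inventory_start_kg)


# A pressure ramp packs the line: inlet exceeds outlet even with no leak.
inv_start = gas_inventory_kg(6.0e6, 290.0, 100.0)
inv_end = gas_inventory_kg(6.1e6, 290.0, 100.0)
packed = inv_end - inv_start

raw_imbalance = 1000.0 - (1000.0 - packed)  # looks like a loss
corrected = corrected_imbalance_kg(1000.0, 1000.0 - packed, inv_start, inv_end)
print(f"raw imbalance: {raw_imbalance:.1f} kg, corrected: {corrected:.1f} kg")
```

In this toy case the raw inlet-minus-outlet difference is entirely line pack, so the corrected imbalance is effectively zero. In practice the inventory term is itself uncertain because pressure, temperature, and compressibility vary along the pipe, which is why small leaks during large ramps are hard to see.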

That’s exactly why physical validation matters.

SwRI’s High-Pressure Loop is a controlled, high-accuracy natural gas test environment that can replicate many aspects of real transmission pipeline operation. It supports generating and measuring leaks at multiple locations — making it a strong setting for testing detection performance under a variety of gas-flow conditions.

What we tested

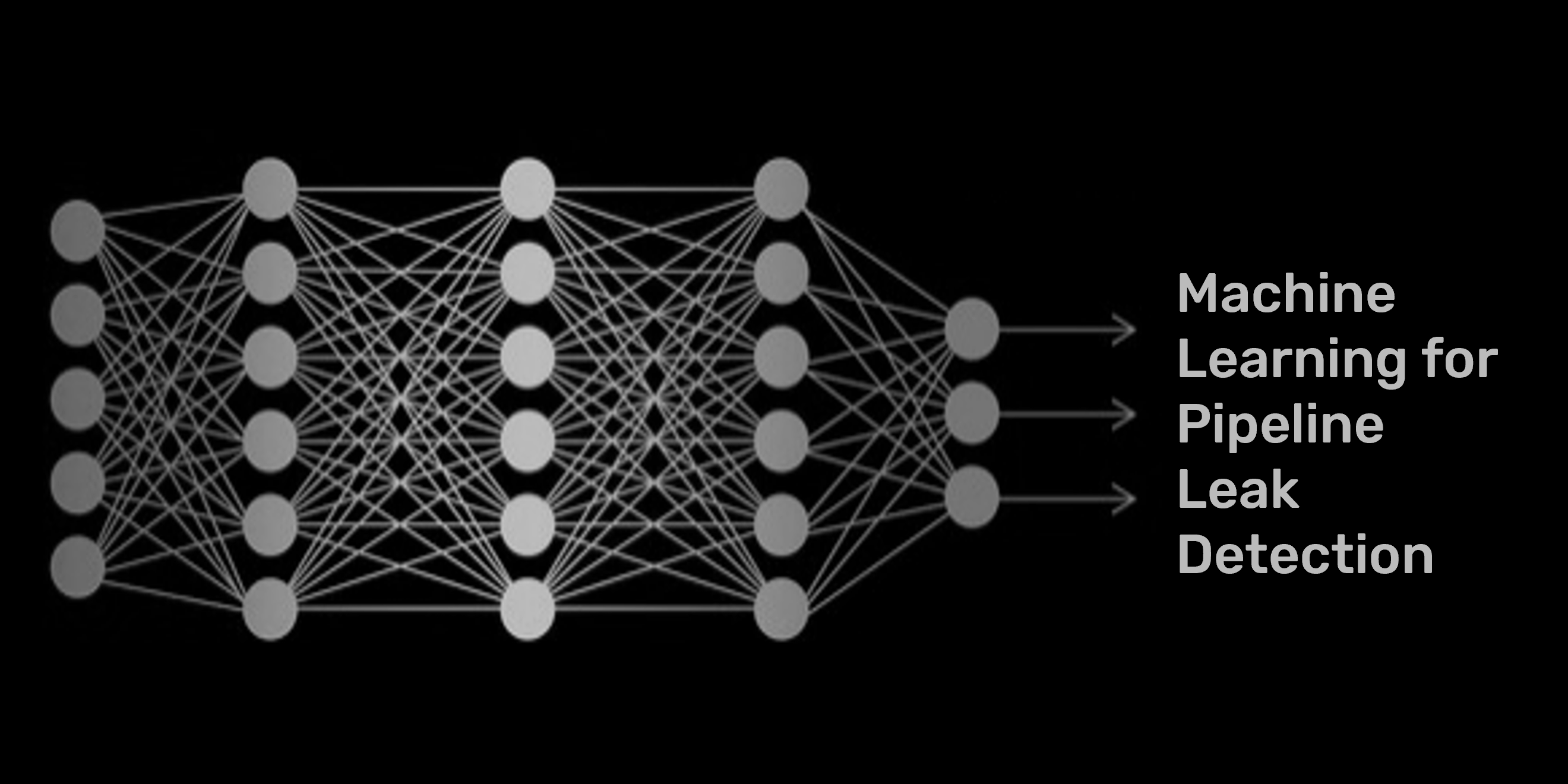

We ran our machine-learning-augmented detection framework across a range of leak sizes, leak locations, and operating conditions.

Equally important, we also ran non-leak baseline cases: steady-state holds, flow ramps, flow step changes, pressure ramps, and mixed transient events. A detection system’s reliability isn’t just about catching leaks — it’s also about staying quiet when there’s nothing to alarm on. Operators won’t trust a system that false alarms during routine operations.

What the results showed

Across 50 controlled leak tests, the framework detected 46, a 92% detection rate.

The four missed detections occurred within the same difficult operating regime: very small leaks at or below 0.1 MMSCFD introduced during large simultaneous flow and pressure ramps. In these cases, the operating transient produced imbalance behavior larger than the leak-induced signal, increasing model uncertainty and reducing leak observability.

Across the documented non-leak baseline cases, the framework generated zero false alarms.

That result is meaningful for two reasons. First, it demonstrates strong detection coverage across a broad set of controlled leak conditions. Second, it helps identify the practical lower boundary of detectability when small leaks are introduced during highly dynamic operating changes. Operators need to know where performance is solid, where uncertainty grows, and where the physics limit what’s detectable. These results give us and our customers a clear, honest picture of all three.

Why this approach is different

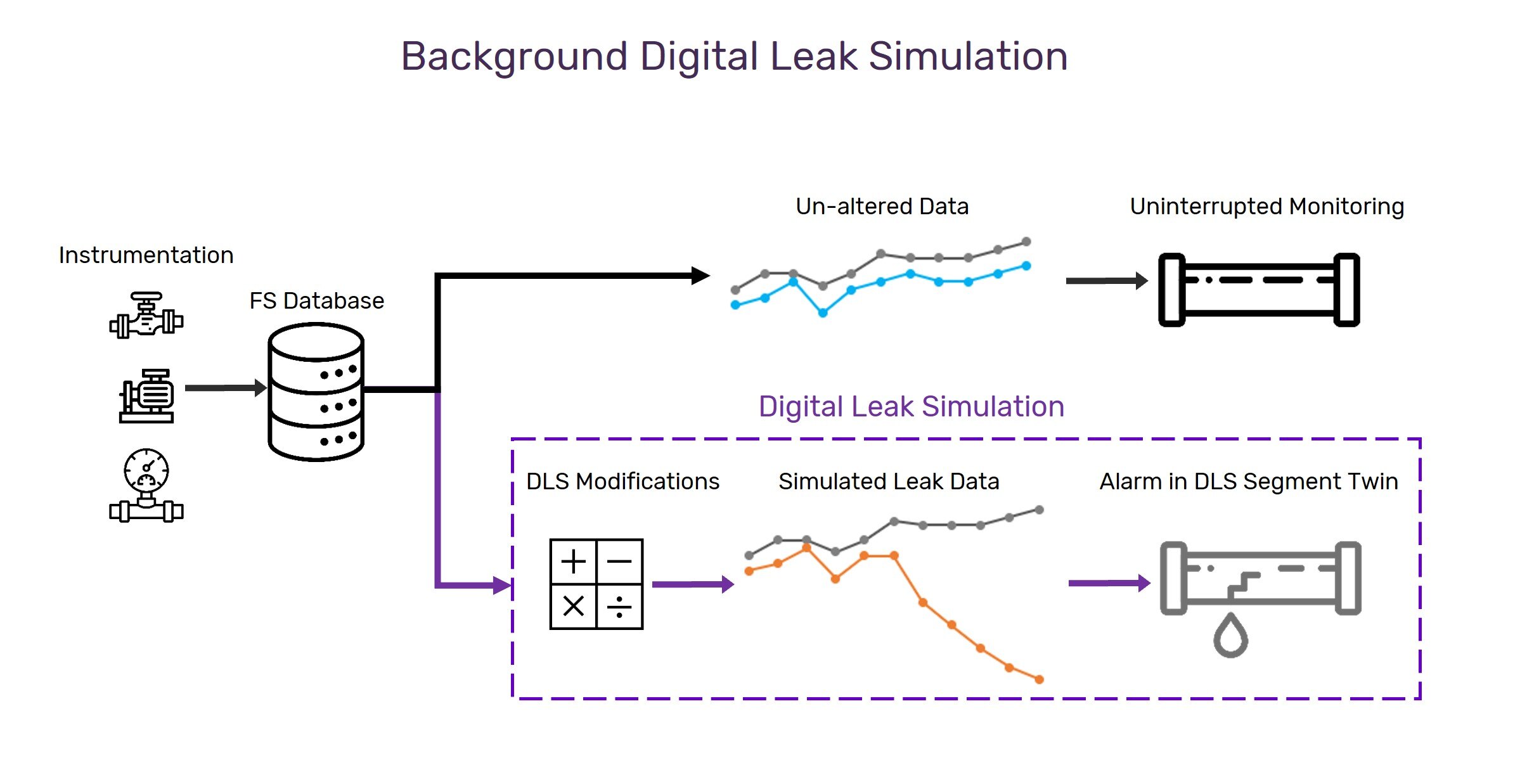

Our system uses machine learning models that are grounded in first-principles physics, rather than trained purely on historical leak patterns. The goal isn’t to memorize every possible operating condition — it’s to give the model enough physical structure to understand how inventory, pressure, flow, and thermal behavior relate across a wide range of conditions.

Pipeline systems don’t operate in a small, predictable envelope. Flow rates change, pressures shift, line-pack evolves, and transients can dominate the measured imbalance. A purely data-driven model needs representative data from every one of those regimes to generalize reliably. A fully physics-based model requires significant engineering effort to build and maintain for each system. Our hybrid approach threads that needle: physics provides the structure, machine learning adapts it to each pipeline’s actual behavior.
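As a toy illustration of the general idea of physics providing structure while a learned term adapts to the pipeline, here is a minimal residual-model sketch. It is not the framework described in this post. The packing coefficient, the single correction feature, the linear form of the learned term, and all data values are hypothetical, and a plain least-squares fit stands in for whatever learning method a real system would use.

```python
# Hypothetical sketch of "physics structure + learned correction".
# Every name and number here is an assumption made for illustration.

def physics_imbalance(delta_pressure_pa, packing_coeff_kg_per_pa):
    """First-principles term: imbalance expected from line-pack change alone."""
    return packing_coeff_kg_per_pa * delta_pressure_pa


def fit_correction(features, residuals):
    """Ordinary least squares for residual ~ a * feature + b (single feature)."""
    n = len(features)
    mean_x = sum(features) / n
    mean_y = sum(residuals) / n
    sxx = sum((x - mean_x) ** 2 for x in features)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(features, residuals))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


def unexplained_imbalance(measured_kg, delta_pressure_pa, feature,
                          packing_coeff_kg_per_pa, correction):
    """Measured imbalance minus the physics term and the learned correction."""
    a, b = correction
    expected = physics_imbalance(delta_pressure_pa, packing_coeff_kg_per_pa)
    expected += a * feature + b
    return measured_kg - expected


# Fit the correction on leak-free data: what the physics term alone did not explain.
leak_free_features = [0.0, 10.0, 20.0, 30.0]   # e.g. a flow-rate-dependent quantity
leak_free_residuals = [1.0, 1.2, 1.4, 1.6]     # measured minus physics, in kg
correction = fit_correction(leak_free_features, leak_free_residuals)

score = unexplained_imbalance(40.5, 5.0e4, 25.0, 7.0e-4, correction)
print(f"unexplained imbalance: {score:.2f} kg")
```

The design point is that the learned term only has to capture what the physics term misses on a given pipeline, so it needs far less data than a model that must learn line-pack behavior from scratch.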

The result is faster deployment than building a detailed physics model from scratch, and better generalization than pure pattern matching. For operators, it means the system can be set up and adapted with less manual effort — while still accounting for the gas-flow mechanics that drive real pipeline behavior.

Why being there in person mattered

There’s a real difference between reviewing test data afterward and watching the system respond as conditions change in real time. Being on site reinforced something important: validation isn’t a documentation exercise. It’s the process of putting a detection framework through controlled physical conditions, with third-party oversight, under operational scenarios that genuinely stress the model’s assumptions. That’s what gives us — and pipeline operators — confidence in what the system can and can’t do. It provides evidence that can guide the path toward dependable field deployment.

What comes next

SwRI was an important step, but not the finish line. We’re continuing to refine the framework, expand testing across additional operating conditions, and apply what we learned from this validation work to more complex field environments. For us, this milestone represents progress toward a more reliable and physically grounded approach to gas pipeline leak detection.

More to come!
